"No one knows right now what the right reference architectures or use cases are for their institution. A lot of people are pretending that they know. But there's no playbook to pull from. Companies chasing AI have gotten ahead of themselves without understanding the underlying problems and limitations of AI-generated code and content."

"From the large language model perspective, people aren't really addressing the fallibility of the underlying text. If you built an AI system from first principles, it would look drastically different from what's offered today. Code can look right and pass unit tests and still be wrong, requiring benchmark tests to properly measure performance."

"The first step for organizations considering AI is experimenting and iterating in a feedback loop. A lot of these companies haven't engaged in a proper feedback loop to see what's actually working. AI still doesn't work very well, even within coding applications."

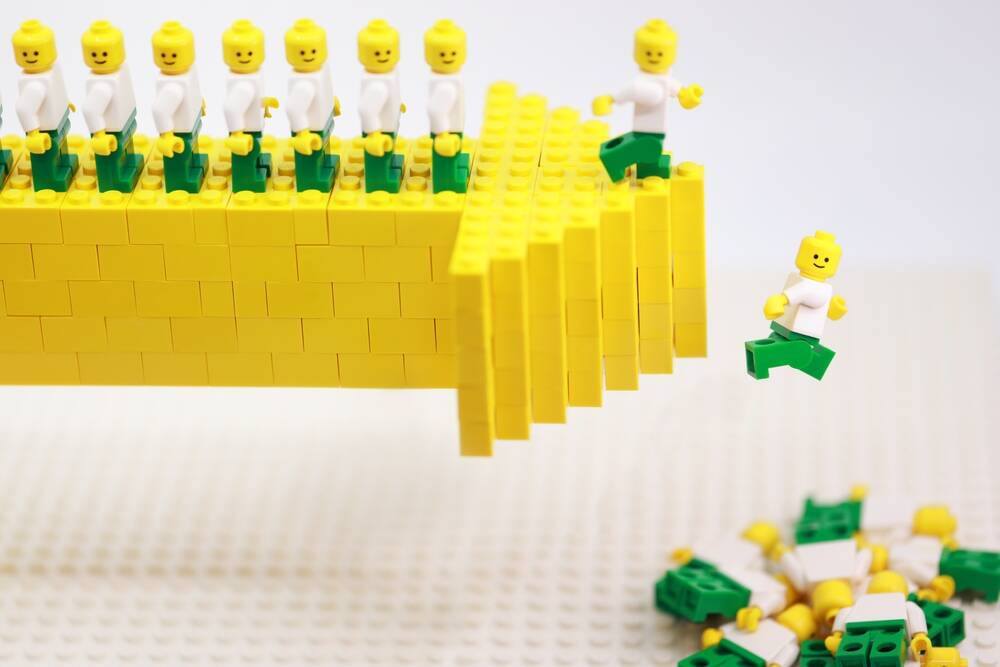

Enterprises are struggling to establish effective AI strategies because no established playbook exists for implementation. Many companies have moved too quickly without addressing fundamental issues, particularly the fallibility of AI-generated content and code. AI-generated code often appears functional and passes unit tests while still containing hidden errors, so benchmark testing is needed to measure how well it actually performs. Organizations need to establish proper feedback loops and experimentation phases before scaling AI adoption. Contrary to predictions about workforce elimination, companies recognize the continued need for skilled professionals. Without clear metrics and measurement frameworks, organizations cannot accurately assess AI system performance and reliability.
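The gap between "passes unit tests" and "is correct" can be shown with a small Python sketch. Everything here is hypothetical and not from the article: `generated_median` stands in for a plausible-looking AI-generated helper, `unit_tests_pass` for a thin test suite, and `benchmark_accuracy` for a broader evaluation that compares the helper against a trusted reference (`statistics.median` from the standard library) on many random inputs.

```python
import random
import statistics


def generated_median(values):
    """Hypothetical generated helper: plausible, but mishandles even-length input."""
    ordered = sorted(values)
    # Bug: for even-length input this returns the upper middle element
    # instead of the mean of the two middle elements.
    return ordered[len(ordered) // 2]


def unit_tests_pass():
    """A thin unit-test suite that happens to cover only odd-length lists."""
    return (
        generated_median([3, 1, 2]) == 2
        and generated_median([5]) == 5
        and generated_median([9, 7, 8, 1, 4]) == 7
    )


def benchmark_accuracy(candidate, reference, trials=1000, seed=0):
    """Fraction of random inputs on which candidate agrees with reference."""
    rng = random.Random(seed)
    agree = 0
    for _ in range(trials):
        size = rng.randint(1, 20)
        data = [rng.randint(0, 100) for _ in range(size)]
        if candidate(data) == reference(data):
            agree += 1
    return agree / trials


if __name__ == "__main__":
    print("unit tests pass:", unit_tests_pass())
    print("benchmark accuracy:", benchmark_accuracy(generated_median, statistics.median))
```

The unit tests all pass, yet the agreement rate against the reference falls well short of 100%. A tracked metric of this kind is one possible input to the feedback loop the article calls for; the sampling ranges and the choice of reference here are illustrative assumptions only.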

Read at The Register
