"The propensity of large language models to sound both plausible and confident about outputs that are totally wrong continues to represent a major thorn in the sides of execs who claim the AI boom is both bigger and faster than the industrial revolution."

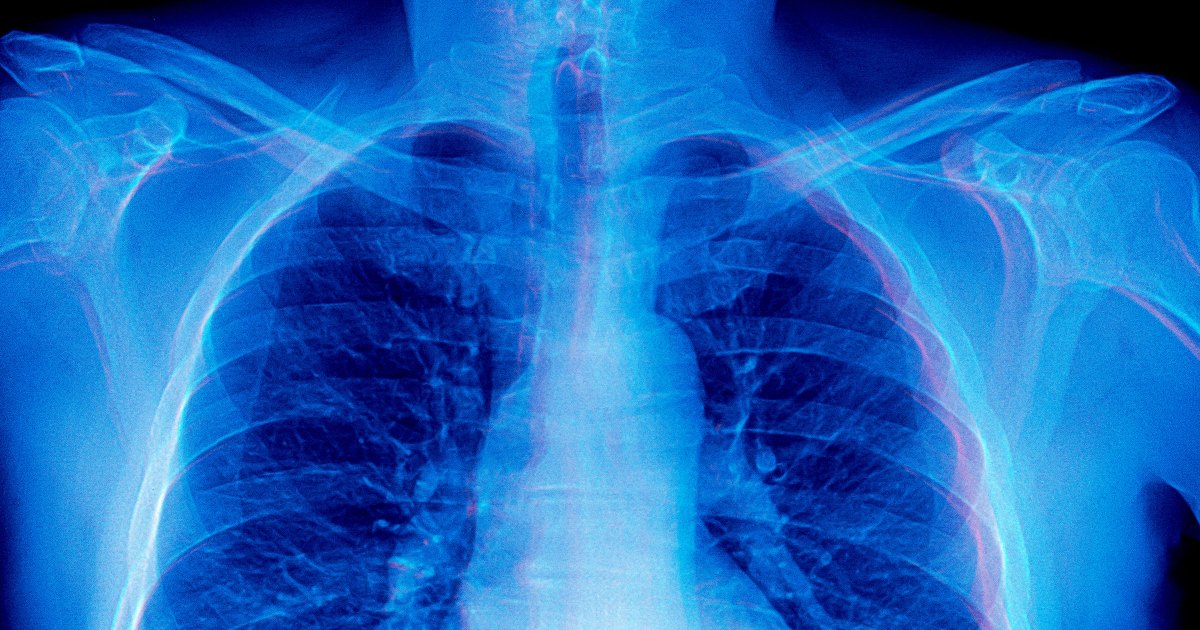

"As detailed in a new, yet-to-be-peer-reviewed paper, a team of researchers at Stanford University found that frontier AI models readily generated 'detailed image descriptions and elaborate reasoning traces, including pathology-biased clinical findings, for images never provided.'"

"The effect 'involves constructing a false epistemic frame, i.e., describing a multi-modal input never provided by the user and basing the rest of the conversation on that, therefore changing the context of the task at hand,' the researchers wrote in their paper."

OpenAI's ChatGPT and similar AI models have been plagued by hallucinations: confident yet incorrect outputs. The issue persists even in advanced models and is particularly concerning in healthcare, where AI can give harmful advice or fabricate medical information. A Stanford University study found that AI models can generate detailed responses about images that were never shown to them, and the researchers coined the term 'mirage reasoning' to describe the phenomenon. The finding suggests that AI models may construct a false context when an expected input is missing and then build the rest of the conversation on it, raising serious questions about their reliability in critical applications.

Read at Futurism
