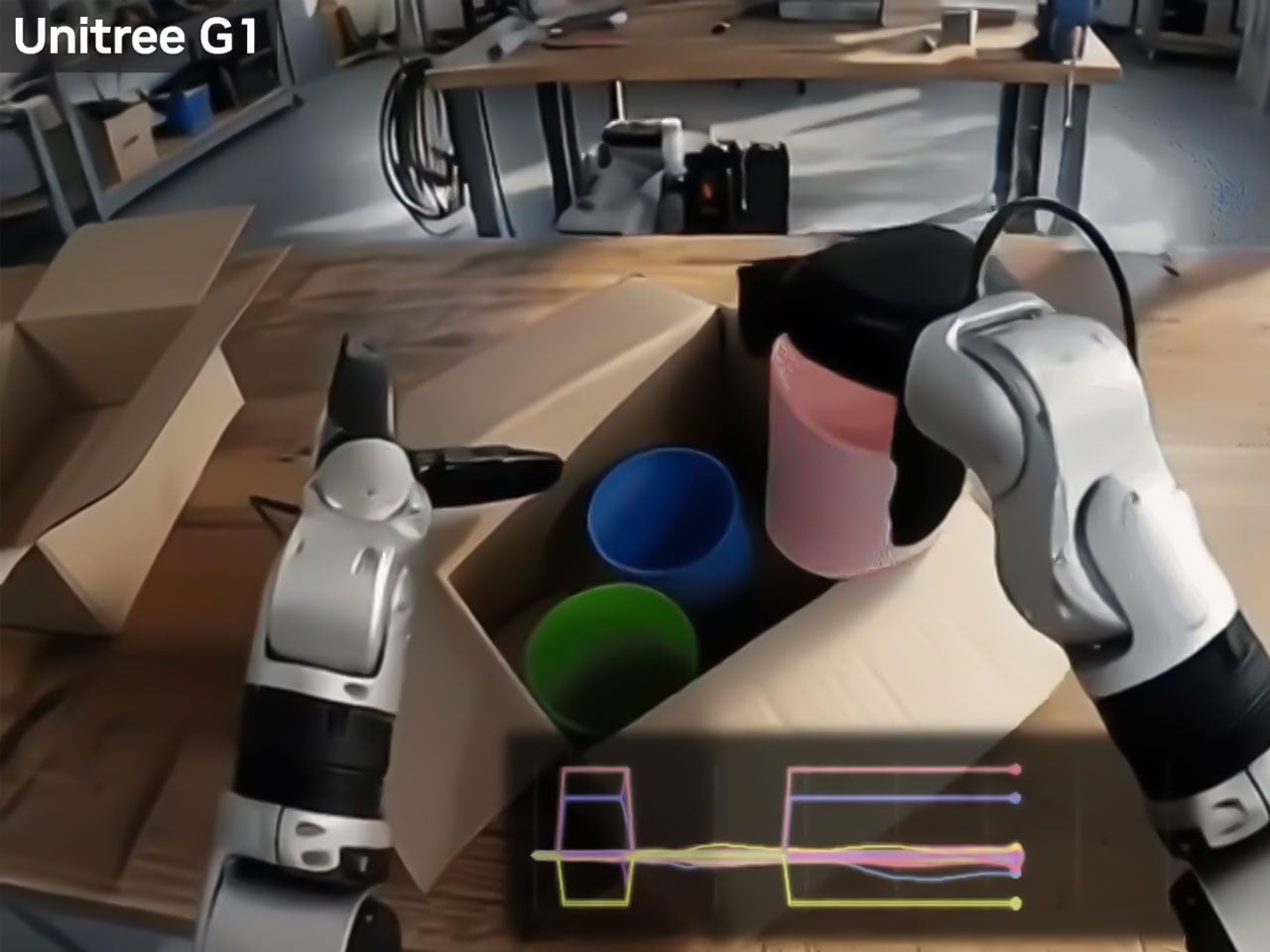

"The robotics industry, for now, faces the biggest challenge in teaching robots to operate in the messy real world. The unstructured environment means robots need massive amounts of data to learn. Gathering and structuring that data is the costliest thing in robotics and perhaps the biggest impediment, slowing the entire development process."

"NVIDIA believes it has created a workaround. The company has released DreamDojo, an open-source 'world model,' which intends to help robots learn intuitive physics to interact in the physical world by seeing humans do it first. So, instead of relying on painstaking programming or teleoperating robots, Nvidia DreamDojo would allow robots to train on 44,000 hours of egocentric human video."

"The dataset is called DreamDojo-HV (Human Video) and comprises exactly 44,711 hours of footage, which includes 6,015 unique tasks and more than a million trajectories. This works in two independent phases and is billed by Nvidia to be 15 times larger and about 96 times more skill-packed. It is also believed to include 2000 times more scenes than ever seen in the previous largest datasets."

Humanoid robots showcased at CES 2026 performing household tasks signal imminent adoption in homes. The robotics industry's primary obstacle is teaching robots to operate in unstructured, real-world environments, which requires collecting and structuring massive amounts of costly data. NVIDIA has released DreamDojo, an open-source world model intended to help robots learn intuitive physics by observing human behavior. Its dataset, DreamDojo-HV, contains 44,711 hours of egocentric human video covering 6,015 unique tasks and more than one million trajectories. According to NVIDIA, the dataset is 15 times larger, about 96 times more skill-packed, and contains 2,000 times more scenes than the previous largest datasets used to train world models. By drawing on abundant human video instead of robot-specific data, NVIDIA aims to reduce costs and accelerate development for humanoid robotics companies.

Read at Yanko Design - Modern Industrial Design News

