"OpenAI has tuned the model to be more skeptical, so it's more likely to tell you when something is a bad drug target. The former was defined as being able to work through complex, multi-step processes, while the latter was derived from the model's performance on a handful of benchmarks."

"It's unclear whether OpenAI has tackled the hallucination issue that has plagued a variety of LLMs and can also strike when the systems are prompted to explain the steps the company took to reach its conclusions."

"The company is limiting access due to concerns about the model's potential for harmful outputs if asked to do something like optimize a virus's infectivity."

"Until we start hearing reports on the effectiveness of this new model, it's difficult to evaluate whether this focus improves its utility."

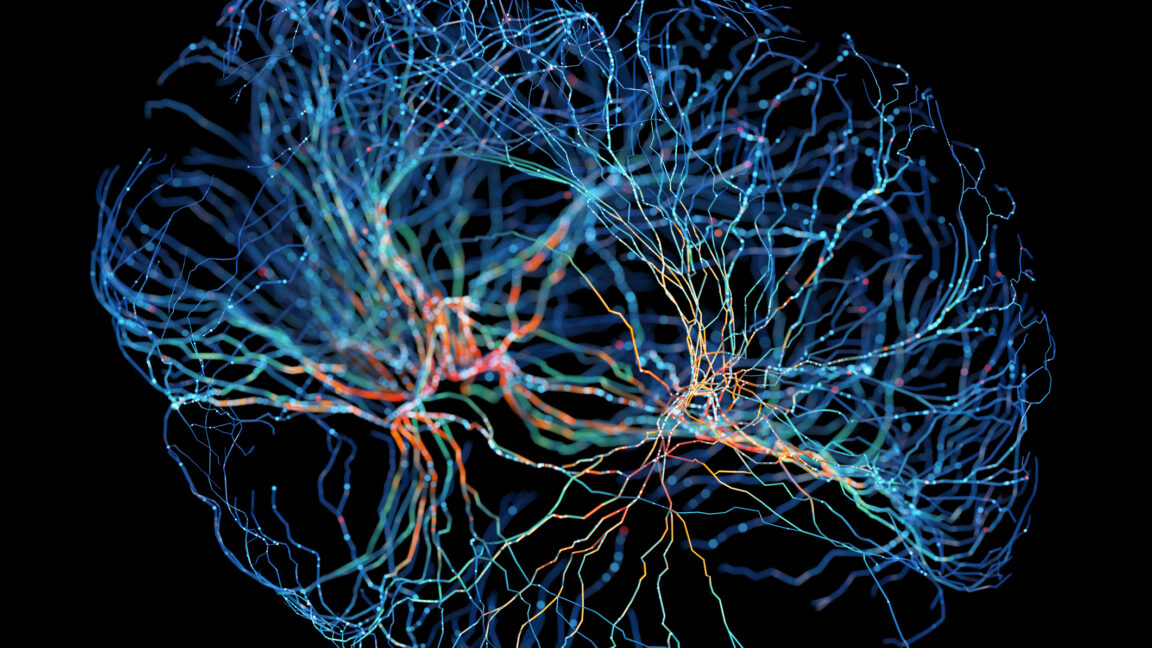

OpenAI has tuned GPT-Rosalind to reduce sycophancy and overenthusiasm, making it more skeptical and more likely to flag a bad drug target. The company defines the model's reasoning as the ability to work through complex, multi-step processes, and bases its claim of expert-level ability on a handful of benchmarks. It remains unclear whether OpenAI has addressed hallucinations, which can also appear when a model is asked to explain the steps behind its conclusions. Access is currently limited to US-based entities because of concerns about harmful outputs, such as optimizing a virus's infectivity; a more restricted Life Sciences Research Plugin will be available. Other companies have released science-focused LLMs, but until reports on GPT-Rosalind's effectiveness emerge, it is difficult to judge whether its biology-specific focus improves its utility.

Read at Ars Technica
