Privacy professionals

from Fortune

10 hours ago

AI is already helping people plan mass shootings. The law is barely paying attention

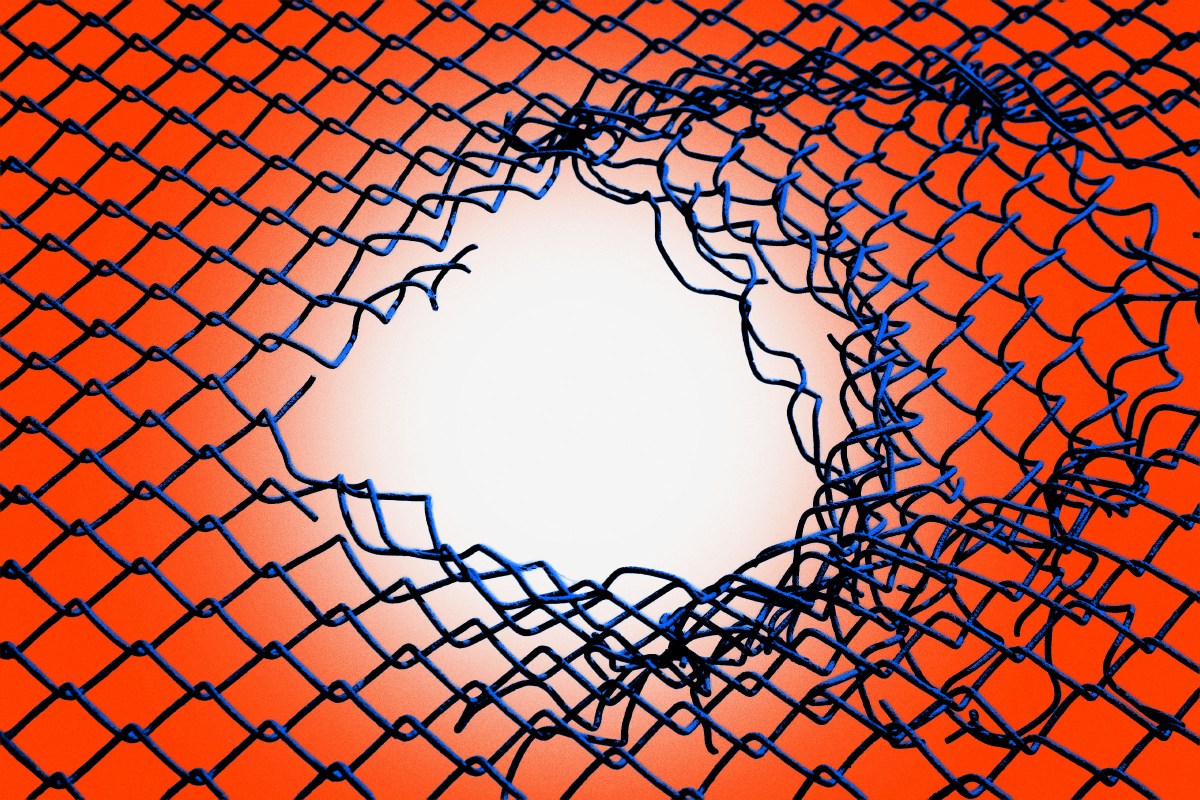

Generative AI incidents raise legal questions about whether companies must warn authorities and whether failure to intervene can constitute negligence.