#reinforcement-systems

#ai #artificial-intelligence #ai-agents #technology #ai-in-education #openai #machine-learning #decision-making

#ai-in-education

Online learning

from eLearning Industry

5 days ago

Rethinking Education With AI: Create More Engaging Learning Experiences With AI-Powered Learning Design

AI can enhance learning design by personalizing experiences and improving relevance, but risks of generic content and diminished critical thinking remain.

Mindfulness

from Silicon Canals

2 days ago

Psychology says people who set an alarm but always wake up five minutes before it goes off aren't light sleepers - they're people whose body never fully trusts that anything external will show up when it's supposed to, so their nervous system runs its own backup system just in case, and that five-minute head start on the day isn't a habit, it's a person who learned very early that depending on something outside yourself to wake you up is a risk their body isn't willing to take

The body wakes up before alarms due to a lack of trust in external cues, reflecting deeper psychological patterns of self-reliance.

Relationships

from Fortune

2 days ago

Teen boys are dating their AI chatbots - and experts warn opting out of real relationships could hurt their careers in the future

Gen Alpha prefers AI relationships for the control and ease they offer, at the risk of not developing the social skills needed for real-life interactions and future careers.

#roblox

Software development

from TNW | Artificial-Intelligence

3 days ago

Roblox AI assistant gets agentic tools to plan, build, and self-test games

Roblox is enhancing its AI assistant with capabilities for planning, procedural generation, and self-correction, transforming it into a junior development partner.

from Futurism

6 days ago

Video Shows Humanoid Robot Chasing a Pack of Wild Boars

A customized Unitree G1 robot can be seen chasing a small group of wild boars through an empty parking lot in Warsaw, Poland. The widely shared footage shows the robot jogging across a small patch of grass in pursuit of the animals, then raising its fist in the air in apparent frustration after they get away.

Pets

Artificial intelligence

from TechCrunch

3 days ago

Physical Intelligence, a hot robotics startup, says its new robot brain can figure out tasks it was never taught

Physical Intelligence's π0.7 model enables robots to perform unfamiliar tasks through compositional generalization, marking a significant advancement in robotic AI capabilities.

from Psychology Today

2 months ago

Artificial Intelligence and In Extremis Decision-Making

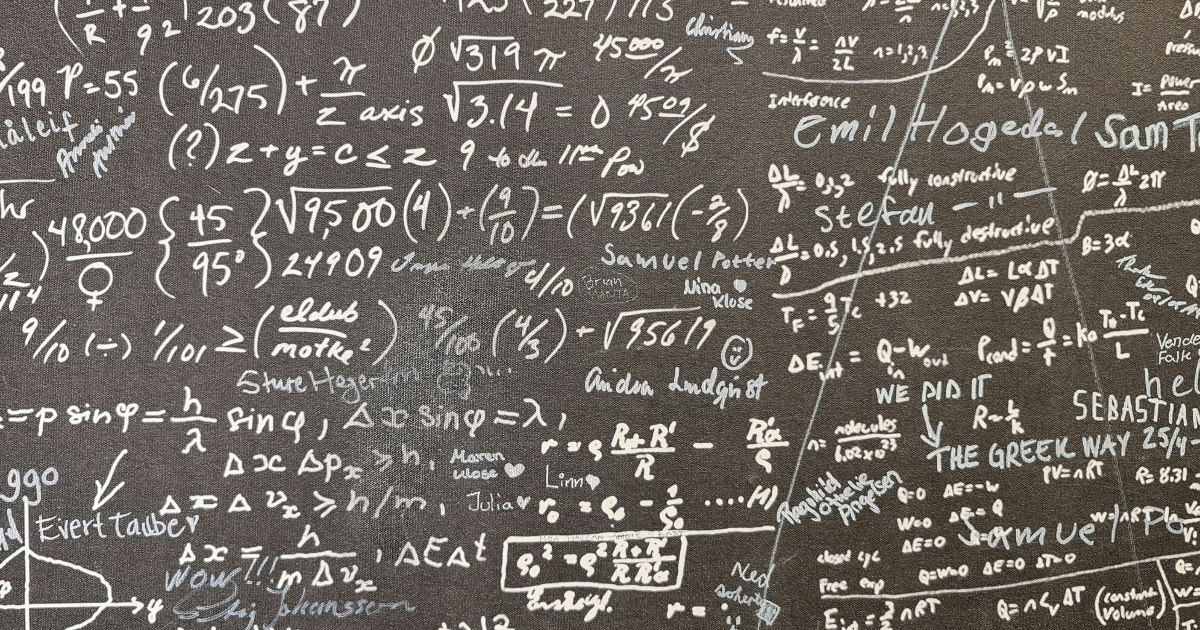

Time pressure, limited information, confusion, fatigue, and mortality salience combine to set the stage for decision-making errors, sometimes with grave consequences. One example is the downing of Iran Air Flight 655 by a missile launched from the USS Vincennes in 1988, which killed all 290 passengers and crew. During a period of heightened tension between the U.S. and Iran, the captain of the Vincennes misidentified the airliner as an incoming hostile aircraft and ordered his crew to shoot it down.

Psychology

from Medium

1 month ago

Why safe AGI requires an enactive floor and state-space reversibility

Frontier AI systems are simply not reliable enough to operate without human oversight in high-stakes physical environments. The Pentagon's demand was, in structural terms, a demand to eliminate the human's ability to redirect, halt, or override the system. Amodei's refusal was an insistence on maintaining State-Space Reversibility - the architectural commitment to keeping the human in the loop precisely because the system lacks the functional grounding to be trusted outside it.

Artificial intelligence

Artificial intelligence

from Big Think

1 month ago

AI that acts before you ask is the next leap in intelligence

Proactive AI that acts independently, learns in real time, and initiates contact represents the next frontier, moving beyond reactive chatbots and user-directed agents to fundamentally transform human-AI interaction.

Artificial intelligence

from Psychology Today

2 months ago

Mind and Machine: A Lethal Cognitive Cocktail

Artificial intelligence is combining with human cognitive vulnerabilities to create an escalating crisis of hybrid intelligence, enabling manipulation through convincing deepfakes and persuasive algorithms.

from InfoWorld

1 month ago

AI agents still need humans to teach them

AI agents need skills, meaning specific procedural knowledge, to perform tasks well, but new research suggests they can't teach themselves. The authors developed a new benchmark, SkillsBench, which evaluates agentic AI performance on 84 tasks across 11 domains, including healthcare, manufacturing, cybersecurity, and software engineering. The researchers looked at each task under three conditions:

Artificial intelligence

[ Load more ]